Generative AI features carry risk: they can mislead users or produce inaccurate information. Zendesk needed a framework for communicating this risk clearly and consistently in order to comply with new laws like the EU AI Act, protect our customers, and reduce risk for our business.

Develop an easy-to-use resource to help product designers quickly determine where, when, and how to disclose generative AI technology to users.

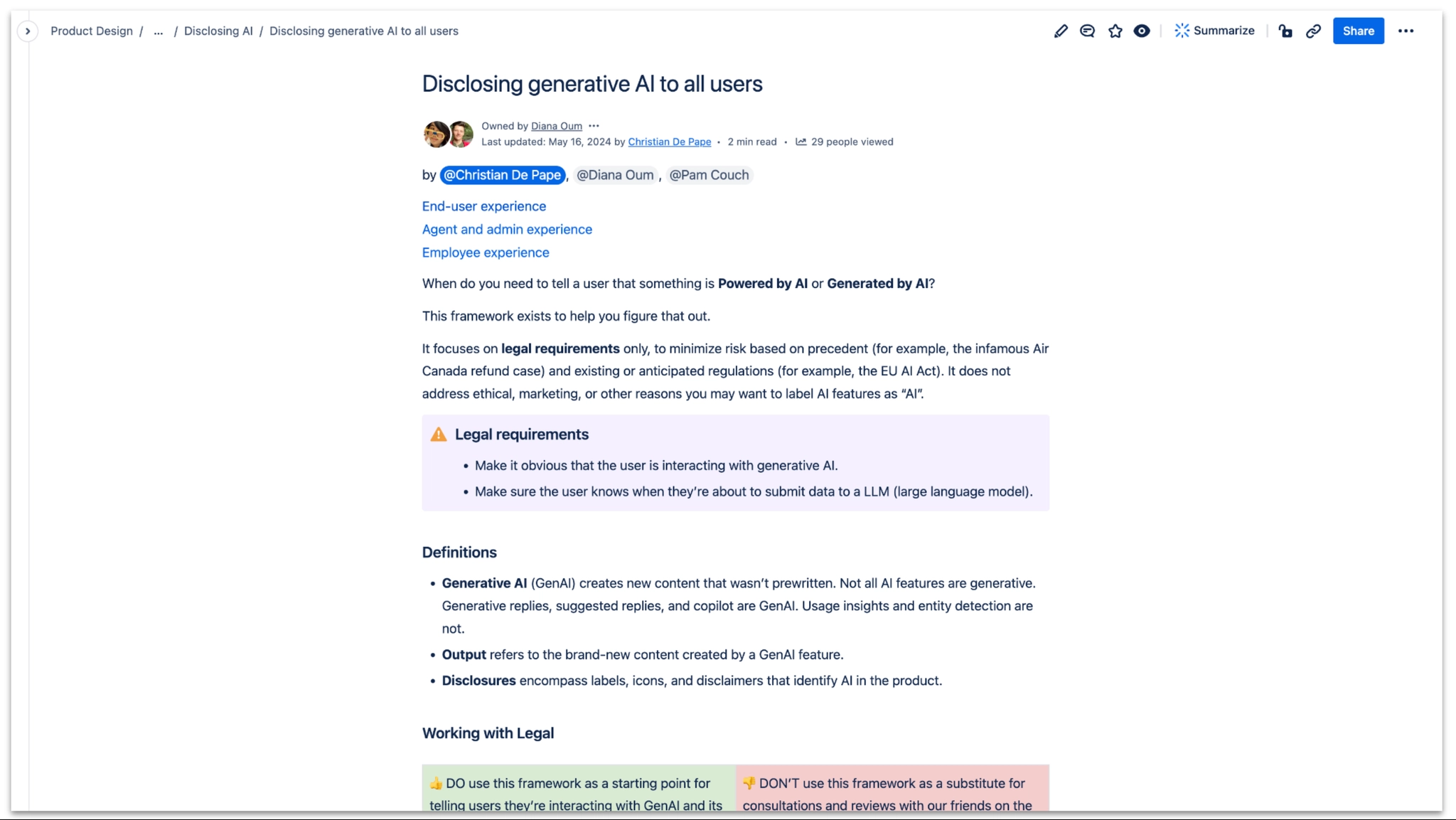

The guide, published to Confluence, covers definitions, legal requirements, and worked examples for both end-user and agent/admin experiences.

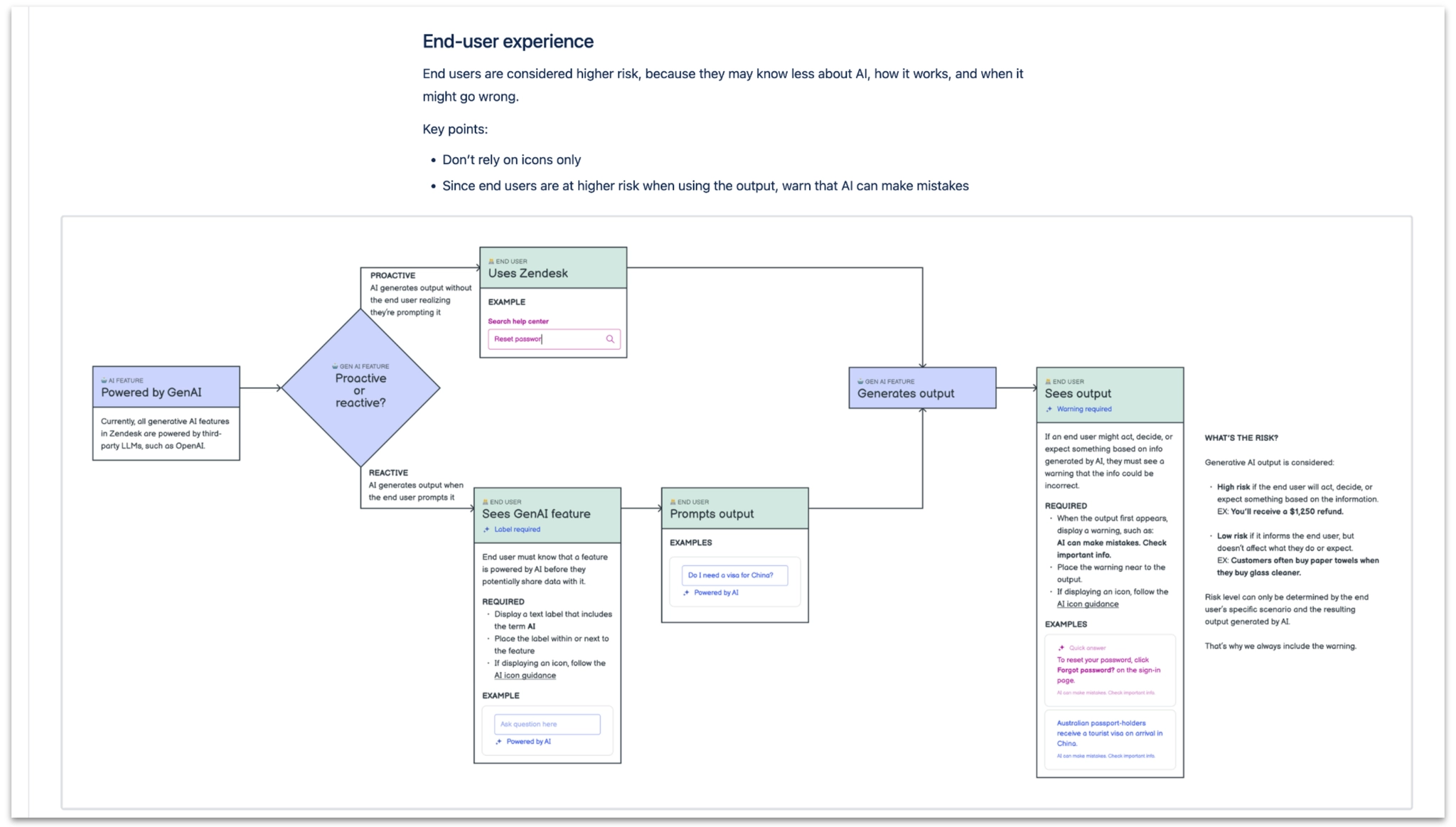

End-user experience: a decision tree helping designers determine when and how to disclose AI to end users, who are considered higher risk because they may be less familiar with the technology.

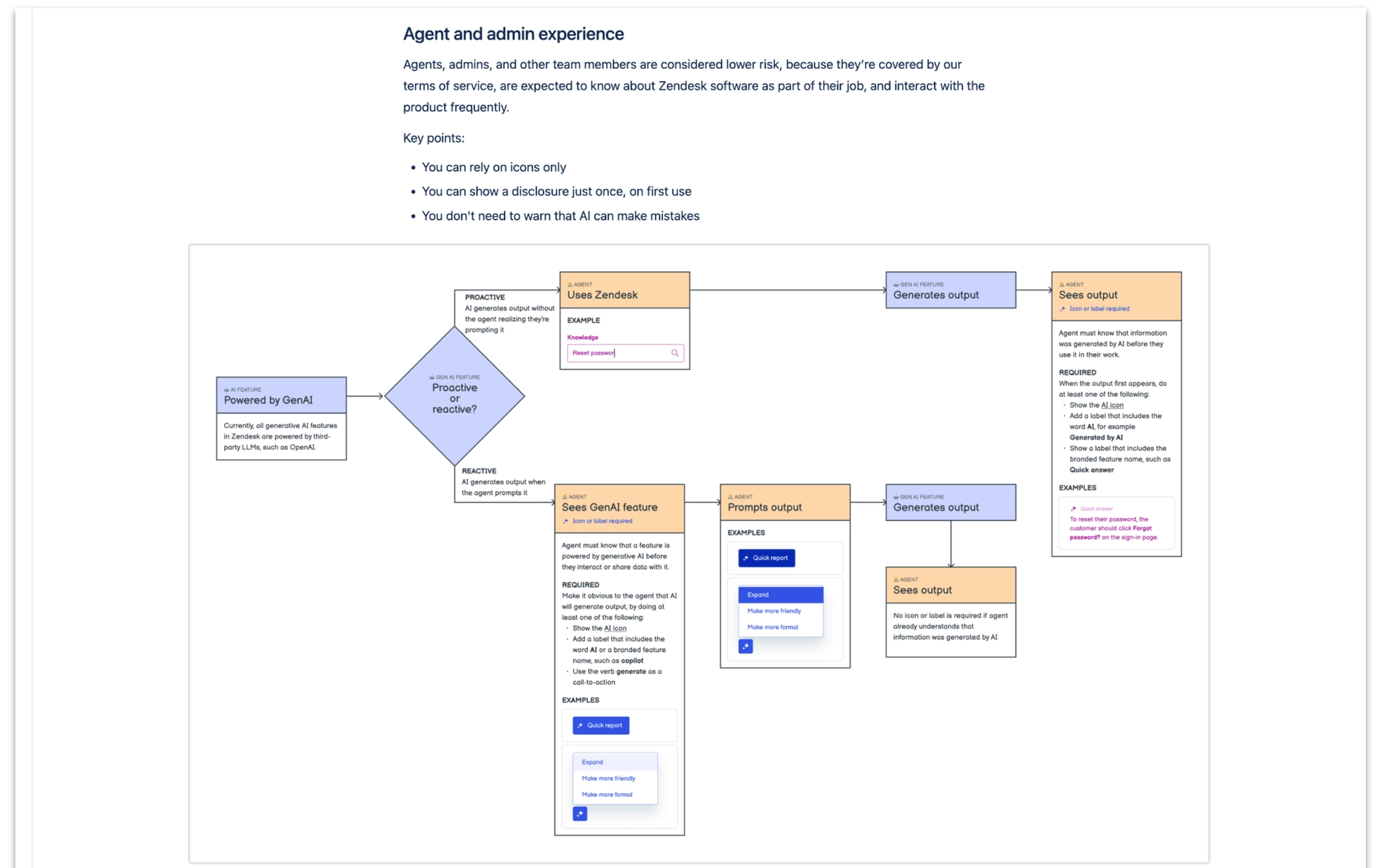

Agent and admin experience: a separate decision tree for support agents and admins, who are considered lower risk because they interact with Zendesk software as part of their job.